Posted

June 20, 2018

Why AI Workloads Require New Computing Architectures – Part 1

I enjoyed presenting to investors at the New Street Research Accelerated Computing conference hosted by Pierre Ferragu on May 30 in New York. For those who were unable to attend, I am summarizing my presentation in two connected blog posts, one for each part of the presentation; this is the first. I hope this summary will help prepare you to attend the upcoming AI Design Forum being hosted by Applied Materials and SEMI on July 10 in San Francisco, as a new track within SEMICON West.

Background

As we first introduced last July, then followed up on at our analyst day in September and on investor conference calls since then, our thesis at Applied Materials is that there will be an explosion of data generation from a variety of new categories of devices, most of which do not even exist yet. Data are valuable because artificial intelligence (AI) can turn data into commercial insights. To realize AI, we are going to have to enable new computing models. So let’s start there.

Key Messages

I have two messages for you. First, AI workloads (e.g., machine learning and deep learning) require a new way to process data, which we call new computing architectures (also known as computing models). I will illustrate what “computing architecture” means and what types of changes are required for AI workloads. Second, AI computing architectures require materials engineering breakthroughs. I’ll discuss some examples of the types of breakthroughs we are referring to. At Applied Materials we are excited because we foresee AI driving a large growth opportunity for materials engineering.

In this blog post, my goal is to summarize how the computing architecture requirements for AI workloads differ from the traditional computing architectures, such as x86 or ARM, that the industry has been familiar with for a couple of decades. I will discuss why traditional von Neumann computing architectures are not sufficient for AI. I’ll also describe an empirical analysis we did to illustrate that if we don’t collectively enable new computing architectures, AI will become unaffordable.

What’s uniquely different about AI workloads?

There are three big differences, and they are inter-related.

First, AI needs a lot of memory, because the most popular AI workloads operate on a lot of data. But the memory also needs to be organized differently. The multi-level cache architectures used in popular CPUs are not necessary for AI. AI requires direct and faster access to memory, with less focus on reusing data by storing it in caches.

There is a lot of emphasis on feeding abundant data into an AI system. Take the Google Translate™ translation service, for example. When Google built it back in 2010, they hired linguists and algorithm experts to implement the translation from English to Chinese. In the end they got 70 percent accuracy with the translation. That’s good but not great. More recently, they took a different approach and hired a lot of data scientists. The data scientists fed each webpage available in English, along with its translation in Chinese, to a relatively straightforward deep-learning algorithm. This gave them much better results, with 98 percent accuracy. As you can see, the focus here is on using a lot more data with a simpler algorithm. This is the argument in favor of powering AI with lots of data.

Second, AI involves a lot of parallel computing. Parallel computing means that you can work on different parts of the workload at the same time, because the parts do not depend on one another. Think of image processing, for example. It is possible to work on different parts of the image in parallel and piece the image together at the end. So again, the pipelining complexity built into traditional CPUs is not needed for AI.
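To make the image example concrete, here is a minimal Python sketch. It is an illustration only, not something from the presentation: the tiny 8x8 "image", the `brighten_tile` operation and the band-splitting helper are all hypothetical. The point is that each band is processed without looking at any other band, so the work can be handed to independent workers and stitched back together in order.

```python
from concurrent.futures import ThreadPoolExecutor


def brighten_tile(tile, delta=10):
    """Brighten one tile; the result depends only on the tile's own pixels."""
    return [[min(255, p + delta) for p in row] for row in tile]


def split_rows(image, n_tiles):
    """Split a non-empty 2D image (list of rows) into up to n_tiles bands."""
    band = -(-len(image) // n_tiles)  # ceiling division
    return [image[i:i + band] for i in range(0, len(image), band)]


def process_in_parallel(image, n_tiles=4):
    tiles = split_rows(image, n_tiles)
    with ThreadPoolExecutor(max_workers=n_tiles) as pool:
        # map() returns results in the same order as the input tiles
        processed = list(pool.map(brighten_tile, tiles))
    # Piece the image back together from the processed bands
    return [row for tile in processed for row in tile]


# Hypothetical 8x8 grayscale image, for illustration only
image = [[(r * 16 + c) % 256 for c in range(8)] for r in range(8)]
result = process_in_parallel(image)
sequential = brighten_tile(image)  # same operation applied to the whole image
```

Here `result` matches `sequential`, which is what independence between the parts means in practice. Threads in standard Python will not make this particular toy faster; dedicated parallel hardware exploits the same independence at far larger scale.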

Third, AI needs lots of low-precision computing, whether it is floating point or integer. This is the power of neural networks, which are at the heart of machine learning and deep learning. Traditional CPUs operate on 64-bit values, with vector registers as wide as 512 bits in some cases. AI does not really need that much precision for the most part.
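As a rough illustration of why low precision can be enough, the sketch below quantizes a small dot product (the core operation inside a neural network layer) to 8-bit integers and compares it with the full-precision result. The weights, inputs and the simple symmetric quantization scheme are assumptions chosen for illustration; they are not from the presentation, and real AI hardware and frameworks use more elaborate schemes.

```python
def quantize(values, bits=8):
    """Symmetric linear quantization of floats to signed integers.

    Assumes at least one value is non-zero (otherwise the scale would be 0).
    """
    qmax = 2 ** (bits - 1) - 1  # 127 for 8 bits
    scale = max(abs(v) for v in values) / qmax
    return [round(v / scale) for v in values], scale


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


# Hypothetical weights and inputs, for illustration only
weights = [0.12, -0.53, 0.88, 0.05]
inputs = [1.5, 0.25, -0.75, 2.0]

q_weights, w_scale = quantize(weights)
q_inputs, x_scale = quantize(inputs)

exact = dot(weights, inputs)                         # full-precision result
approx = dot(q_weights, q_inputs) * w_scale * x_scale  # integer math, rescaled
rel_error = abs(approx - exact) / abs(exact)
```

For these made-up values the 8-bit result lands within a few percent of the full-precision one, while every multiply inside `dot(q_weights, q_inputs)` operates on small integers.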

So what we have here are three fundamental and significant computing architecture changes needed for AI workloads. This brings us to the topic of homogeneous versus heterogeneous computing architecture.

Homogeneous vs. Heterogeneous Computing

In the PC and mobile eras, the majority of applications (or workloads) looked similar in terms of their requirements for processing (i.e. computing architecture). Initially all the workloads were handled by CPUs. Then as we started using more pictures, videos and gaming, we started using GPUs.

In the future, our workloads are going to look increasingly dissimilar, with each one having its own requirements for computing. What will be needed is a variety of different architectures, each optimized for a particular type of workload. This is what we refer to as a “renaissance of hardware,” because it is driving architecture innovation for a variety of such new workloads.

There is one more reason why the industry is going from homogeneous to heterogeneous computing. It has to do with power density, which is a limiter for improving the performance of traditional CPUs. We are at a point where performance improvements with contemporary multi-core CPU architectures have become difficult to achieve. What’s fundamentally needed for AI workloads is higher power efficiency (i.e., less energy consumed per operation). With the end of Dennard scaling, the only way to do this is to build domain-specific or workload-specific architectures that fundamentally improve computational efficiency.
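The link between a power limit and energy per operation can be sketched in a few lines of Python. All numbers below are hypothetical placeholders, not measurements of any real chip; the only relationship being shown is that sustained throughput equals the power budget divided by the energy each operation costs.

```python
def max_ops_per_second(power_budget_watts, joules_per_op):
    """Sustained throughput under a fixed power budget (1 W = 1 J/s)."""
    return power_budget_watts / joules_per_op


# Hypothetical values, for illustration only
POWER_BUDGET_W = 100.0
GENERAL_PURPOSE_J_PER_OP = 1e-9
SPECIALIZED_J_PER_OP = 1e-10

general = max_ops_per_second(POWER_BUDGET_W, GENERAL_PURPOSE_J_PER_OP)
specialized = max_ops_per_second(POWER_BUDGET_W, SPECIALIZED_J_PER_OP)
speedup = specialized / general
```

When the power budget cannot grow, the only term left to improve is the energy per operation, which is the argument for workload-specific architectures.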

Empirical Analysis — DRAM and NAND Shipments Correlated with Data Generation

To understand the relationship between data generation and computing requirements, we compared annual DRAM and NAND shipments with annual data generation. The empirical relationships we found suggest that DRAM and NAND shipments both grow faster than data generation. The mathematical relationships imputed in our analysis are representative of the underlying computing architecture.

We did a thought experiment using the empirical relationships we found. We considered the effect of adding in data generation from one percent adoption of smart cars (L4/L5). Assuming each smart car generates about 4TB of data per day, we found that total data generated increases by 5x relative to ex-smart car levels in 2020.

According to this analysis, using the traditional computing model, we would need 8x the installed capacity of DRAM (in 2020) to handle one percent adoption of smart cars, and 25x the installed capacity of NAND. At Applied Materials we would absolutely love for that to happen, but we don’t think it will. Instead, the industry will need to adopt new types of memory based on new materials and 3D design techniques, and new computing architectures.
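One way to read these multiples is as growth exponents. The post does not state the functional form of the empirical relationships, so the sketch below makes an explicit assumption: that memory capacity scales as a power of data generated. Under that assumption only, the 5x, 8x and 25x figures above imply the exponents computed here. The function name is hypothetical.

```python
import math


def implied_exponent(capacity_multiple, data_multiple):
    """Exponent k such that capacity_multiple == data_multiple ** k.

    Assumes a power-law relationship, which the post does not confirm.
    """
    return math.log(capacity_multiple) / math.log(data_multiple)


DATA_MULTIPLE = 5    # from the post: total data vs. ex-smart-car level
DRAM_MULTIPLE = 8    # from the post: DRAM capacity needed
NAND_MULTIPLE = 25   # from the post: NAND capacity needed

dram_k = implied_exponent(DRAM_MULTIPLE, DATA_MULTIPLE)
nand_k = implied_exponent(NAND_MULTIPLE, DATA_MULTIPLE)
```

Both exponents come out above 1 (roughly 1.3 for DRAM and 2 for NAND), which is consistent with the earlier statement that DRAM and NAND shipments grow faster than data generation.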

This concludes part one of the summary of my presentation from the New Street Research Accelerated Computing conference. Traditional von Neumann computing architectures are not going to be economical or even feasible for handling the data deluge required for AI. New computing architectures are needed. Stay tuned for part two, to read about the role materials engineering will play in enabling new computing architectures for AI.

Google Translate is a trademark of Google LLC.

Tags: artificial intelligence, AI, iot, Big Data, computing architecture, AI Design Forum

Sundeep Bajikar

Corporate Vice President, Corporate Strategy and Marketing

Sundeep is Head of Corporate Strategy and Marketing (CSM) and guides the executive leadership team in the development of the company's growth thesis, strategic priorities, key initiatives and investor communications. He is also responsible for developing Applied’s marketing capabilities and community. Sundeep’s work served as the foundation for Applied’s strategies related to AI, ICAPS, Heterogeneous Integration and Net Zero.

He joined Applied in 2017 after spending ten years as a Senior Equity Research Analyst covering global technology stocks, including Apple and Samsung Electronics, for Morgan Stanley and Jefferies. Previously he worked for a decade as a researcher, ASIC design engineer, system architect and strategic planning manager at Intel Corporation.

Sundeep holds an MBA in finance from The Wharton School and M.S. degrees in electrical engineering and mechanical engineering from the University of Minnesota. He holds 13 U.S. and international patents with more than 30 additional patents pending. Sundeep is also the author of a book titled “Equity Research for the Technology Investor – Value Investing in Technology Stocks.” He was an Institutional Investor Rising Star.

Adding Sustainability to the Definition of Fab Performance

To enable a more sustainable semiconductor industry, new fabs must be designed to maximize output while reducing energy consumption and emissions. In this blog post, I examine Applied Materials’ efforts to drive fab sustainability through the process equipment we develop for chipmakers. It all starts with an evolution in the mindset of how these systems are designed.

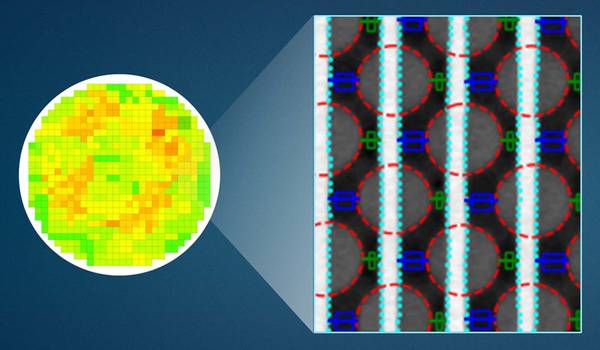

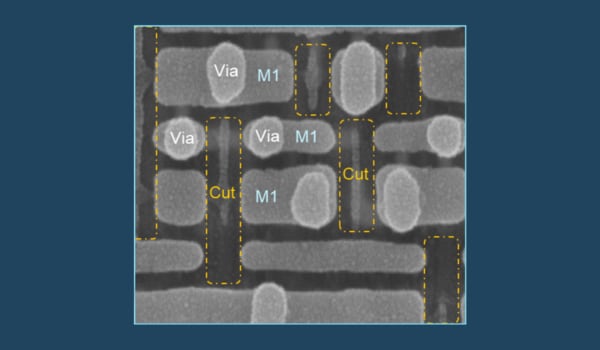

Innovations in eBeam Metrology Enable a New Playbook for Patterning Control

The patterning challenges of today’s most advanced logic and memory chips can be solved with a new playbook that takes the industry from optical target-based approximation to actual, on-device measurements; limited statistical sampling to massive, across-wafer sampling; and single-layer patterning control to integrative multi-layer control. Applied’s new PROVision® 3E system is designed to enable this new playbook.

Breakthrough in Metrology Needed for Patterning Advanced Logic and Memory Chips

As the semiconductor industry increasingly moves from simple 2D chip designs to complex 3D designs based on multipatterning and EUV, patterning control has reached an inflection point. The optical overlay tools and techniques the semiconductor industry traditionally used to reduce errors are simply not precise enough for today’s leading-edge logic and memory chips.