Trending Topics

Applied Materials Receives Intel EPIC Supplier Award

Applied Materials Accelerates Materials Simulation for the AI Era in Collaboration with NVIDIA

Applied Materials Raises Quarterly Cash Dividend by 15 Percent

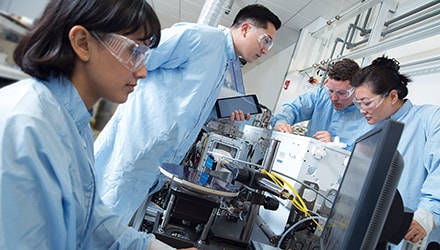

Applied Materials and SK hynix Announce Long-Term R&D Partnership to Accelerate AI Memory Innovation at EPIC Center in Silicon Valley